On June 30th, 2017, ISE Fleet Services registered a certified compliant ELD (Electronic Logging Device) with the FMCSA (Federal Motor Carrier Safety Administration). Our journey to reach this milestone was long and arduous… and was also a series of lessons in applying an Agile mindset. In this blog post, I share what I see as some of the key lessons from our journey…

The Context

In the United States, many drivers of commercial vehicles fall under federal Hours of Service rules that limit the amount of time drivers can drive, and require the drivers to keep log books of their activities so that they can demonstrate compliance with the limits. These rules are intended to make our roads safer by reducing driving while fatigued. For more than 20 years, use of electronic logs has been optional, and most drivers have used paper logs. By December 18, 2017, use of electronic logs – along with a new set of regulations for ELDs (Electronic Logging Devices) – is mandatory*.

(* This article is not specifically about ELD compliance. If you are curious about exact ELD compliance information, see https://eld.fmcsa.dot.gov/).

As a business, our challenge was to update our existing electronic logging system to comply with the new ELD rules, and to self-certify our system early enough to provide our customers and partners with confidence that purchasing our system would be a wise decision.

Getting started in the face of uncertainty

After the new rules were finalized in December 2015, we updated our analysis of impacts to our system and went through an initial round of estimating. The initial estimates were not too alarming. We were in the tail end of a large functional update to our system, so we went on to complete and roll out that update. We then structured a team around the ELD work (with other teams handling general enhancements / maintenance and partner integrations), and got started.

Getting started was difficult. The team that produced the initial estimates had not been trained in agile estimation and planning methods, yet we were conducting the project in an agile manner, so we did not start with a full backlog of the work to accomplish. What we did do was start breaking the work down into user stories and vertical slices, looking for ways we could make progress even though the big picture was still unclear. We also incorporated some infrastructure updates and technical debt reduction into this initial work, so that we would be well positioned to run more smoothly over time.

In my view, this “getting started in the face of uncertainty” turned out to have a number of advantages:

- We were able to make valuable progress

- We were able to establish a team velocity – this became important later as we did get to the big picture view

- We were able to maintain and evolve a “done-ness” definition that ensured that completed stories were done-done and that our per-release regression testing was against a high quality baseline

- We did not get stuck in “analysis paralysis”, waiting for a complete analysis before making progress

- We built valuable experience in interpreting the government’s rules and envisioning what these meant for our users, our integration partners, and our product

- We gained a good sense for the issues that would be our major impediments – issues we would have to tackle in order to make progress

- We developed a successful cross-team working relationship that allowed the other teams to also deliver value

- We gained experience with the new rules and context that helped us develop the big picture

Agile takeaways:

- Useful progress can be made, and useful learning can be gained, by getting started, even if the big picture is not clear

- When estimating and planning a large project, experienced agilists can help guide a team to creating an effective agile plan

Getting to the big picture

We made several months of valuable progress… but the big picture still eluded us. Leadership had set a target release date, but we could not see whether our rate of progress would get us there or not. We had many choices as to the levels of non-essential functionality we would include along the way – but how ruthless did we need to be in focusing on the essentials? It was time to get to the big picture.

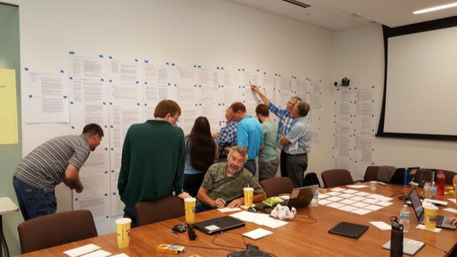

We conducted a two day planning workshop, which was heavily planned and facilitated in order to get us to that big picture. Key elements of this workshop:

- Participation from business, engineering, and customer service

- A story writing activity, where we mapped the ELD rules to user stories, and also captured user stories for useful enhancements that we wanted to fold into the product along the way

- A prioritization activity, where we ranked user stories by the value they would deliver

- An estimation activity, where we assigned story point estimates to each story

- A release planning activity, where we partitioned the work into likely releases: what can we deliver early to provide value?

- An analysis and next steps activity, where we drew conclusions and decided on next steps

Photo: Deriving user stories from the regulatory text. There’s another wall of text opposite this one!

Photo: Using The Estimation Game to rank stories by value

The planning workshop gave us a big picture view of the work left to do… about 200 user stories… and how long it would take to do everything we wanted… about 3 years! This was not a good answer… we did not have 3 years. But it was a useful answer. The radical transparency of looking at the work and the likely rate of progress gave us what we needed so that we could start making hard decisions. That user interface overhaul that would polish the user experience and fix some bugs? Off the table. A revamp of the test automation system? Off the table. A focus on what would give us a compliant and usable product? Razor sharp.

The planning workshop gave us other gifts:

- A clear alignment and sense of “we’re in this together” across the engineering, business, and customer service teams. This alignment was sustained throughout the project.

- A business-level appreciation for the effort and complexity – and sheer magnitude – of the work ahead. (A stack of 200 story cards tells its own story.)

- A shared understanding that our plan was probably 80% of what was needed – that there would be additional discovery along the way

- The basis for a build-up chart that would show us visually our progress against the remaining work

Agile takeaways:

- Involving cross-functional participants in a planning workshop builds alignment

- A “good enough” plan (what’s all the work we can think of?) is valuable, and can be created in a short period of time

- Even an initial estimated backlog and build-up can help provide critical focus on what is important/valuable – helping drive even hard decisions about much-cherished features of lesser value

- An established team with an established velocity for the work being done provides realistic information about the rate of subsequent work

- Using “already estimated and done” stories as reference points helps calibrate estimation activities such as the Team Estimation Game

- Facilitation matters: planning and conducting this workshop according to learning from Agile Coaching Institute’s “The Agile Facilitator” helped ensure that our two days were focused, productive, and valuable

- Face-to-face participation was extremely valuable; as you can see in the photographs, we made use of wall space and table space to make sure that all of the information was visible all of the time

- Leadership response to transparency is critical and very telling. Our leadership response was a healthy one of “what can we all do to meet this challenge?” and not one of denial or “shoot the messenger”.

A strategic dilemma… we were wrestling with a number of overlapping and competing objectives, including:

- The product must be ready in time for customers to meet regulatory compliance dates

- We want the product at a compliant state much earlier, as this means we can join the list of registered providers, and so more customers can feel comfortable choosing our solution before the compliance date

- We want the product at a compliant state early so that our integration partners can complete their integration and also register their systems; we want to deliver select features early to aid partner integration

- We want to complete a series of functional enhancements that help our product and our partners’ products serve a larger customer base

- We don’t have unlimited investment funds to scale the teams doing the work

Our agile approach and big picture planning did not resolve the strategic dilemma for us. However, it did provide us with data to help us make decisions to balance these objectives.

It’s too big. What do we do now?

With the critical information of size / build-up from our planning workshop, the engineering / product team went through a planning refinement exercise. This exercise had two key focal points:

- Make the work smaller

- Go faster

Making the work smaller:

This was a detailed “scrub” of the backlog that we had generated. For each story, we considered:

- What part of this is essential, and what part is optional? Where can we draw a line between creating something compliant and convenient vs. fully streamlined and very polished? Can we define what’s in our MVP vs. post-MVP “make it better later”?

- What similarity or shared infrastructure exists when we look at other user stories? Are there any orderings or groupings that would help streamline the work?

- What questions do we have (that lead to large estimates due to uncertainty or risk)? What clarification work can we do early that will help reduce that risk and uncertainty?

Go faster:

This was a detailed look at our experience as a team so far and our technical practices. While our retrospectives had been revealing impediments and opportunities, we took this opportunity to take a fresh look. We chose a number of improvement actions, and speculated on the range of possible velocity improvements that we might get out of these.

We came out of this activity with:

- A refined backlog, partitioned into value-providing releases up to an MVP (launchable product) along with a list of key enhancements that we could take on after that launch

- A set of improvement actions, including recommendations to leadership for actions that were outside the team’s authority

- A speculative velocity range (here’s how fast we might go)

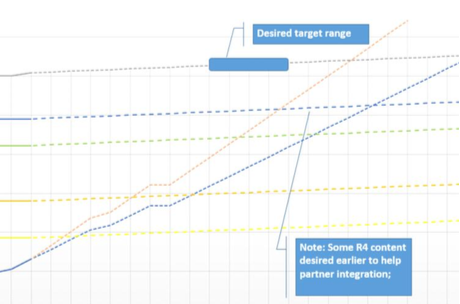

- A speculative build-up chart, showing possible completion dates; if we met our velocity aspirations, we would be later than we wanted but still in the game

- A team mindset of challenging every backlog item and every acceptance criterion: What do we really need to do here? What can we not do, or do later?

Figure: Initial build-up chart

From this basis, we dived back into the work and began implementing the improvement actions. What we learned over time is that our “improved velocity” speculations were optimistic. In our best sprints over the remainder of the project, we managed to hit the mid-range of our aspirational velocity, but our consistent average was below the low end of the speculated improvement. One mistake I made in maintaining our build-up chart was showing the build-up based on the aspirational velocity range for too long, which meant we were making decisions based on optimistic information rather than actual information. We did, in the end, take into account the actual rate of progress and update our stakeholders with a new target date.
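To make the gap between aspirational and actual forecasts concrete, here is a minimal sketch of the arithmetic behind a build-up projection. Every number in it is hypothetical (our real backlog size and velocities are not reproduced here), and the function name `sprints_to_finish` is just an illustration, not part of any tool we used.

```python
import math
from statistics import mean


def sprints_to_finish(remaining_points: float, velocity: float) -> int:
    """Whole sprints needed to complete remaining_points at a constant velocity."""
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return math.ceil(remaining_points / velocity)


# All values below are hypothetical, chosen only to illustrate the pattern.
remaining_points = 600
aspirational_range = (30, 40)                   # speculated low/high velocity
observed_velocities = [22, 31, 24, 35, 26, 24]  # points completed per sprint

# The best sprint (35) reaches the middle of the aspirational range,
# but the average falls below its low end.
observed_average = mean(observed_velocities)

forecast = {
    "aspirational high": sprints_to_finish(remaining_points, aspirational_range[1]),
    "aspirational low": sprints_to_finish(remaining_points, aspirational_range[0]),
    "observed average": sprints_to_finish(remaining_points, observed_average),
}

for label, sprints in forecast.items():
    print(f"{label}: {sprints} sprints")
```

With these made-up inputs, the forecast based on observed velocity lands several sprints later than even the pessimistic end of the aspirational range, which is the kind of difference that stays hidden while the chart is drawn from aspirations.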

Agile takeaways:

- Advertising a build-up based on aspirational velocity is tempting. Don’t succumb. It delays reality-based decision making.

- Having team alignment and mindset around “what’s of value” (in this case, balancing compliance, convenience, early partner integration, and time-to-market) helps drive conversations of simplicity and focus. What can we do now that will deliver sufficient value, and improve later?

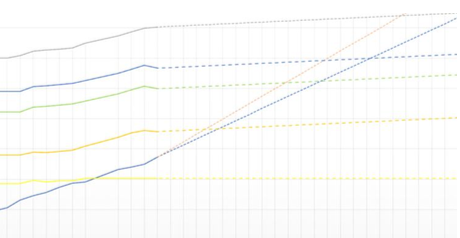

Allowing for discovery

Looking at the earlier build-up chart, you’ll notice that the target release lines are not horizontal (fixed scope) but have an upward slope, representing an allowance for discovery: what work have we not predicted? A later build-up chart (below) reveals that the actual rate of discovery was significantly higher… in fact in some sprints, the target moved almost as fast as the team!

Figure: A later build-up chart
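The effect of a sloped target line can be sketched with a simple linear model: each sprint the team completes some points while discovery adds some new ones, so only the difference closes the gap. This is an assumption-laden illustration, not how our chart was actually produced; the function name and all numbers are hypothetical.

```python
import math
from typing import Optional


def sprints_with_discovery(
    remaining_points: float,
    velocity: float,
    discovery_per_sprint: float,
) -> Optional[int]:
    """Sprints until done when scope grows by discovery_per_sprint each sprint.

    Returns None when discovery matches or outpaces velocity, because the
    gap between completed work and the target never closes.
    """
    net_progress = velocity - discovery_per_sprint
    if net_progress <= 0:
        return None
    return math.ceil(remaining_points / net_progress)


# Hypothetical inputs: 600 points remaining, 27 points completed per sprint.
for discovery in (0, 5, 20, 27):
    result = sprints_with_discovery(600, 27, discovery)
    print(f"discovery {discovery}/sprint -> {result} sprints")
```

In this model a modest discovery allowance adds a few sprints, while discovery that approaches the team's velocity pushes the finish line out dramatically, and discovery equal to velocity means the lines never meet.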

A significant part of our discovery was the many unanticipated impacts to the existing product driven by the data model changes we had adopted to satisfy the new ELD requirements. These were compounded by our backward compatibility approach, which would allow users of our existing products to seamlessly continue to use those products and upgrade to ELD functionality on their own schedule. One thing we did really well was continuously manage the backlog, looking at each new item in the context of where it fit with our goals for each release and the overall project.

Agile takeaways:

- In a project with any complexity, doing the work teaches us more about what the work is

- It is difficult to predict how much discovery (scope growth) to allow for

- Having a goal/purpose for each release eases continuous backlog management, because “where things go” is clearer

Technical debt and infrastructure improvement: a dilemma

On any project there is a tension between spending time “adding functionality” and “improving what we have so we can go faster”. Agile technical approaches such as Clean Code promote sustainable development by building in the cleanup and improvement along the way. As we started out on the ELD effort, some of the early work identified was infrastructure improvements to ease maintenance and development over the long term. As the big picture became clear, a sense of time-urgency emerged, tipping the balance towards “adding functionality” and away from “improving what we have so we can go faster”. As a team, we had to make a number of difficult choices: here’s a clear improvement that we could make that will help us go faster in the long run, but we think it will slow us down between now and our ELD target release – what shall we do? For the most part, we chose to defer the improvements. It means that we will have some additional work ahead of us (some of which has already been done) for long-term improvement.

Agile takeaways:

- In striving for sustainable development, context matters. In a time-to-market situation, we may choose to defer some improvements.

- Keeping an eye out for “what’s sustainable” is an important skill and task. For example, if a particular test framework/approach shows early signs of not being sustainable (longer and longer test run times, many intermittent failures), changing approach is much easier before there is an extensive body of tests using the unsustainable approach.

The final push

The core ELD team continued to make solid and steady progress. We made two releases with solid deliveries and high quality. The other teams completed significant feature deliveries. Our progress was solid but not enough to meet the target date. With a clear view of reality and the strategic dilemma, we adjusted course:

- We adjusted release content (again) so that we could meet compliance and partner integration first (prioritizing time to market) and then quickly follow up with additional convenience features – which would be available before end users needed them

- We added teams: both the existing product teams that had been working on enhancements, maintenance and integration, and teams borrowed from our professional services arm

- In the last weeks, we asked individuals to put in the extra effort to get us to target, and provided additional support (e.g. dinner brought in a couple of nights a week)

At this point, many of you are thinking that we are tempting Brooks’ Law: “adding manpower to a late software project makes it later”. Indeed, scaling from one team to five was a challenging task… our challenges included:

- Supporting team members with limited or no experience in this product / codebase while limiting the impact to work getting done

- Keeping the build and core test automation scenarios green and clean

- Effectively dividing and coordinating work across five teams

- Keeping quality high as multiple teams impacted the “most busy” parts of the codebase

- Managing the size of code reviews and merges

- Recovering from an accidental wrong-way merge

Fortunately, the two existing product teams were already integrated into the workflow, and one of the other teams had prior experience with the product codebase. Only one team was at a large disadvantage, and we assigned this team the work that could be made most modular. Could some things have been improved? Of course. But there’s a lot that went well. Here are some of the things I observed:

- Cross-team code reviews, especially for code from the less-experienced teams, helped reduce the impact of code changes made without deep knowledge of the product and codebase

- A cross-team PO (with technical help from the teams) coordinating the assignment of work to the teams so as to reduce overlap and collisions and make good use of teams’ specific areas of expertise

- Team members who stepped up to jump on broken builds and tests and get us back to “green” helped ensure a smooth flow across all the teams

- A deep level of quality ownership, where team members detected and helped troubleshoot bugs introduced by other teams

- Visible commitment, support and appreciation from leadership, fostering the sense of “we’re all in this together”

- A lot of cross-team communication, enabling increased understanding and reducing the number of blind alleys and bugs introduced

- Fast responses on cross-team communication channels (Slack) helped reduce broken build time and delays caused by waiting for information

Did Brooks’ Law apply in our case? Yes and no. We were early enough adding teams, and had enough depth across the teams, that we were able to make our certification target. We certainly expended a larger effort getting there (an efficiency-for-time tradeoff) and left ourselves with a bit more cleanup (the regression / release cycle was longer than for previous releases). We made our target and there was an enormous sense of shared pride in the achievement.

Agile takeaways:

- Adding teams adds complexity (surprise!)

- Having an appropriate planning horizon is important to project success. Agile projects can often plan only the “next release”. Faced with a certain set of knowns (new regulations, compliance dates) and goals (time-to-market), we needed to keep enough of a plan to see the big picture. We still deferred much of the user story refinement (keeping user stories as “placeholders for conversations” until the time neared to bring them into sprints), but we had to invest enough effort in backlog grooming and story estimation so that the build-up chart reflected “good enough” knowledge to enable decisions – such as when to bring on extra teams.

- Fostering a shared goal and sense of “we’re in this together” helps enable cross-team coordination. Seeing the big picture and being able to reach out across team boundaries to understand how a team’s work folds into the larger architecture and codebase makes a big difference when integrating new teams into an ongoing project.

Celebration, and what’s next?

On June 30th, 2017, ISE Fleet Services registered a certified compliant ELD (Electronic Logging Device) with the FMCSA (Federal Motor Carrier Safety Administration). We are proud of the accomplishment, and of the dedication and teamwork that got us here. We have learned a lot along the way that will make us better over time. And our work is not done: we now have improvements and polish to add… what’s the next most valuable thing we can do?